Video intelligence infrastructure examples: a guide

AI product managers and developers building video intelligence platforms face a deceptively hard infrastructure problem. The wrong choice doesn't just slow your pipeline — it compounds: transcoding costs balloon, latency creeps into real-time workflows, and extraction reliability becomes a daily fire drill. These video intelligence infrastructure examples cut through the noise by analyzing five distinct approaches, from compositional virtualization to serverless orchestration to edge-first processing, so you can match the right architecture to your actual workload before you commit.

Table of Contents

- Key criteria for evaluating video intelligence infrastructure

- ION Video: video superintelligence for compositional infrastructure

- VAST DataEngine: serverless real-time video search and AI summarization

- Kognition AI: edge-first, multi-camera video analytics platform

- AWS video intelligence infrastructure: scalable GPU orchestration and lifecycle management

- Perceptron Mk1: cost-efficient, high-performance video analysis model

- Comparing video intelligence infrastructure examples: capabilities and trade-offs

- Making the right infrastructure choice for your video intelligence platform

- Why combining compositional and serverless orchestration defines the future of video intelligence

- Explore TornadoAPI: production-scale video extraction for your platform

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Evaluate key criteria | Consider scalability, real-time processing, cost, data sovereignty, and integration when choosing video intelligence infrastructure. |
| Compositional virtualization | ION Video’s approach avoids duplication by making every video frame addressable with semantic metadata. |
| Serverless orchestration | VAST DataEngine enables scalable, real-time video search by running serverless functions on object storage. |
| Edge analytics benefits | Kognition processes video locally across multiple cameras for low latency and enhanced security insights. |
| Cost-effective AI models | Perceptron Mk1 offers high-performance video analysis at significantly reduced costs compared to competitors. |

Key criteria for evaluating video intelligence infrastructure

Before comparing any specific platform, you need a shared framework. Infrastructure decisions made without clear criteria tend to optimize for the wrong thing — usually whatever the vendor demo made look impressive.

When evaluating any video intelligence infrastructure, these are the dimensions that actually matter in production:

- Scalability under real load: Can the system handle sudden spikes without manual intervention? Queue-based autoscaling and elastic compute are table stakes for AI workloads.

- Real-time processing and latency: Some use cases tolerate batch processing; others don't. Security monitoring and live content workflows require sub-second response. Know which camp you're in.

- Data sovereignty and edge support: Regulated industries and enterprise security deployments often cannot route video through public cloud. Edge-capable infrastructure is not optional for those teams.

- Semantic understanding and metadata enrichment: Raw video is expensive to store and query. Infrastructure that generates embeddings, transcriptions, or scene metadata at ingestion time pays dividends downstream.

- Cost model transparency: GPU compute is not cheap. Understand whether you're paying per minute of video, per API call, per token, or for reserved capacity — and model it against your actual volume.

- Integration with existing workflows: The best infrastructure is the one your team can actually wire into your stack in a week, not six months.
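
One way to make these criteria comparable across vendors is a weighted score. The sketch below is illustrative only: the weights, the 1-5 rating scale, and the criterion keys are assumptions chosen for the example, not values from any vendor or benchmark.

```python
# Hypothetical weights for the six criteria listed above; they sum to 1.0.
# Adjust them to reflect what matters for your own platform.
WEIGHTS = {
    "scalability": 0.20,
    "latency": 0.20,
    "data_sovereignty": 0.15,
    "semantic_metadata": 0.15,
    "cost_transparency": 0.15,
    "integration": 0.15,
}


def weighted_score(ratings: dict[str, int]) -> float:
    """Combine per-criterion ratings (assumed 1-5) into one weighted score."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


# Made-up ratings for a placeholder candidate, not a real vendor assessment.
candidate = {
    "scalability": 4,
    "latency": 5,
    "data_sovereignty": 2,
    "semantic_metadata": 4,
    "cost_transparency": 3,
    "integration": 4,
}
score = weighted_score(candidate)
```

Scoring every candidate against the same weights before vendor conversations makes it harder for a polished demo to shift the criteria after the fact.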

A thorough video infrastructure evaluation framework helps you formalize these criteria before vendor conversations begin.

ION Video: video superintelligence for compositional infrastructure

Most video infrastructure treats a video file as an atomic unit. ION Video rejects that assumption entirely.

ION Video's Video Superintelligence infrastructure, launched in January 2026, virtualizes video archives without duplication. It combines virtualization, semantic understanding, and real-time assembly to enable frame-accurate semantic orchestration from a single master source. The practical implication: you can address any frame, any segment, any semantic moment in your archive without creating derivative files.

Key capabilities of ION Video's approach:

- Frame-level addressability without transcoding or duplication, which eliminates the storage cost spiral common in large media archives

- Semantic metadata embedded directly in the video structure, so queries return contextually relevant results rather than filename matches

- Adaptive video experiences assembled in near real-time from a single master source, supporting personalization and rights-aware delivery

- Native MP4 compatibility preserved, meaning downstream systems don't require format changes

- IP governance at the source, giving rights holders control over how content is assembled and distributed

The compositional model is a genuine architectural shift. If your platform manages large archives and your team is spending engineering cycles on transcoding pipelines and duplicate storage management, this is worth serious evaluation. The video infrastructure build vs buy decision looks very different when virtualization eliminates the build cost of a transcoding layer entirely.

Pro Tip: If your archive exceeds 100TB, calculate your current cost of maintaining derivative files (proxies, clips, thumbnails). That number usually makes the case for compositional infrastructure faster than any benchmark.
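
The calculation in this tip can be sketched in a few lines. The derivative ratio and the storage price below are placeholders to replace with your own figures; neither comes from ION Video or any provider's price list.

```python
def derivative_storage_cost(
    master_tb: float,
    derivative_ratio: float,
    usd_per_tb_month: float,
) -> float:
    """Monthly cost of storing derivative files (proxies, clips, thumbnails).

    derivative_ratio is the size of all derivatives relative to the masters,
    e.g. 0.4 means derivatives add 40% on top of the master archive.
    """
    return master_tb * derivative_ratio * usd_per_tb_month


# Assumed inputs: 100 TB of masters, derivatives at 40% of master size,
# and a placeholder storage price of 23 USD per TB per month.
monthly = derivative_storage_cost(100, 0.4, 23.0)
yearly = monthly * 12
```

This covers storage only. The compute spent producing the derivatives and the engineering time spent keeping them in sync come on top of it.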

VAST DataEngine: serverless real-time video search and AI summarization

VAST DataEngine approaches video intelligence from the data layer up. Rather than treating video as a file to be moved, it treats video as a dataset to be queried.

As of January 2026, VAST DataEngine orchestrates serverless functions on S3-compatible storage for real-time video search. It processes video chunks into vector embeddings stored in VastDB, with sub-second retrieval for multimodal queries. The architecture avoids the data movement bottleneck that kills latency in traditional pipelines.

Here is how a typical VAST DataEngine workflow operates:

- Video is ingested and broken into segments, which are processed in parallel by serverless functions without requiring a dedicated compute cluster.

- Each segment generates a vector embedding stored directly in VastDB, enabling similarity search across the entire corpus.

- AI summarization runs concurrently, producing structured metadata alongside the raw embeddings.

- Multimodal queries — natural language, video-to-video similarity, or hybrid — execute against the vector store with sub-second response times.

- Client applications receive results through a standard API, enabling responsive search interfaces without custom caching layers.
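
The embed-then-search pattern in the steps above can be illustrated with a minimal in-memory sketch. It does not use the VastDB API. The `Segment` class, the hand-written three-dimensional vectors, and the `search` function are all hypothetical stand-ins; a real system would get embeddings from a model and store them in a vector database.

```python
import math
from dataclasses import dataclass


@dataclass
class Segment:
    video_id: str
    start_s: float
    embedding: list[float]  # produced once, at ingestion time


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(index: list[Segment], query: list[float], top_k: int = 3) -> list[Segment]:
    """Return the top_k segments most similar to the query embedding."""
    ranked = sorted(index, key=lambda s: cosine(s.embedding, query), reverse=True)
    return ranked[:top_k]


# Toy index with made-up embeddings, purely for illustration.
index = [
    Segment("vid-1", 0.0, [0.9, 0.1, 0.0]),
    Segment("vid-1", 10.0, [0.1, 0.9, 0.0]),
    Segment("vid-2", 0.0, [0.0, 0.2, 0.9]),
]
hits = search(index, query=[0.0, 0.1, 1.0], top_k=1)
```

The query only compares vectors that already exist, which is why generating embeddings at ingestion keeps search latency low.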

The serverless model means you pay for processing time, not idle GPU capacity. For platforms with bursty ingestion patterns — think a podcast platform processing a week's worth of uploads on Monday morning — this is a meaningful cost advantage. Building AI training datasets at scale benefits directly from this kind of architecture, since embedding generation and retrieval happen in the same system.

Pro Tip: Vector embeddings generated at ingestion time dramatically reduce query latency later. If your current architecture generates embeddings on-demand at query time, you're paying a latency tax on every search.

Kognition AI: edge-first, multi-camera video analytics platform

Cloud-first video analytics platforms have a fundamental problem: they require reliable internet connectivity, introduce latency, and create data sovereignty concerns. Kognition AI is built for the environments where those constraints are real.

As of February 2026, the Kognition AI platform processes video at the edge in real time across multiple cameras, fusing their data for 24/7 monitoring with zero cloud dependency. It supports any IP camera and VMS integration.

| Capability | Edge processing (Kognition) | Cloud processing |
|---|---|---|
| Internet dependency | None | Required |
| Latency | Milliseconds | Seconds |
| Bandwidth cost | Minimal | High |
| Data sovereignty | On-premise | Provider-dependent |
| Multi-camera fusion | Native | Requires aggregation layer |
| VMS integration | Any IP camera | Platform-specific |

Beyond the table, Kognition's multi-camera data fusion is the capability that separates it from single-camera analytics tools. Fusing feeds from multiple angles improves detection accuracy for complex scenarios like crowd behavior, vehicle tracking across zones, and cross-camera identity correlation. For enterprise security teams, that accuracy difference is operational, not academic.

Key enterprise advantages:

- Unified analytics across people, vehicles, behavioral patterns, and threat indicators

- No dependency on cloud uptime for mission-critical monitoring

- Compliance-friendly architecture for regulated industries

- Scales to large camera deployments without linear bandwidth cost increases

If your platform serves enterprise security or any use case requiring video clipping and live recording at the edge, Kognition's architecture removes the cloud bottleneck entirely.

AWS video intelligence infrastructure: scalable GPU orchestration and lifecycle management

For teams that need to run multiple AI models concurrently at enterprise scale, cloud orchestration with proper infrastructure-as-code is the practical path. AWS provides the building blocks; the architecture is what matters.

As of November 2025, an AWS enterprise video intelligence deployment defined in Terraform scales GPU workers between a min_size of 2 and a max_size of 20, targeting an SQS queue depth of 100 messages. It runs on ECS Fargate and uses an S3 Intelligent Tiering lifecycle rule that transitions objects after 30 days.

| Component | Role | Scale trigger |
|---|---|---|
| SQS queue | Job buffering | Queue depth > 100 messages |
| ECS Fargate | Container orchestration | Automatic with queue depth |
| GPU workers | Model inference | min 2, max 20 instances |
| S3 Intelligent Tiering | Storage lifecycle | 30-day transition |
| Redis | Result caching | Fixed capacity |
| PostgreSQL | Metadata management | Fixed capacity |
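
The scaling rule in the table can be sketched as a small function. It is not the Terraform policy itself. Treating the 100-message target as backlog per worker is an assumption made for this example, and `desired_workers` is a hypothetical helper rather than an AWS API.

```python
import math


def desired_workers(
    queue_depth: int,
    target_per_worker: int = 100,  # assumed meaning of the 100-message target
    min_size: int = 2,
    max_size: int = 20,
) -> int:
    """Worker count needed to keep backlog per worker at or below the target."""
    wanted = math.ceil(queue_depth / target_per_worker)
    # Clamp to the configured floor and ceiling.
    return max(min_size, min(max_size, wanted))


# An empty queue still keeps the minimum fleet; a large backlog is capped.
idle = desired_workers(0)
busy = desired_workers(450)
capped = desired_workers(5000)
```

The floor of 2 keeps the pipeline responsive when the queue is empty, and the ceiling of 20 bounds the worst-case GPU bill during a spike.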

A well-designed AWS deployment runs transcription, visual detection, and scene understanding concurrently rather than sequentially. That parallelism is the key performance lever. Here is the recommended pipeline sequence:

- Video lands in S3 and triggers an SQS message.

- ECS Fargate scales GPU workers based on queue depth, using Terraform-managed autoscaling policies.

- Transcription, object detection, and scene analysis run in parallel across separate model containers.

- Results are cached in Redis and written to PostgreSQL for downstream querying.

- S3 Intelligent Tiering automatically moves older processed video to cheaper storage tiers after 30 days.
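
The parallel step in this sequence can be sketched with Python's standard library. The three stage functions are placeholder stubs that return dummy results. In a real deployment each one would call a separate model container, and the names here are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


# Placeholder stages: each would normally call out to its own model service.
def transcribe(video_key: str) -> dict:
    return {"stage": "transcription", "video": video_key}


def detect_objects(video_key: str) -> dict:
    return {"stage": "object_detection", "video": video_key}


def analyze_scenes(video_key: str) -> dict:
    return {"stage": "scene_analysis", "video": video_key}


STAGES = {
    "transcription": transcribe,
    "objects": detect_objects,
    "scenes": analyze_scenes,
}


def process_video(video_key: str) -> dict:
    """Run the independent stages concurrently and collect their results."""
    with ThreadPoolExecutor(max_workers=len(STAGES)) as pool:
        futures = {name: pool.submit(fn, video_key) for name, fn in STAGES.items()}
        # result() blocks until that stage finishes and re-raises its errors.
        return {name: future.result() for name, future in futures.items()}


results = process_video("uploads/example.mp4")
```

Because the stages do not depend on each other, total latency approaches that of the slowest stage rather than the sum of all three.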

The pricing decision becomes clearer when you model this architecture against your monthly video volume: GPU autoscaling means you're not paying for peak capacity 24/7.

Perceptron Mk1: cost-efficient, high-performance video analysis model

Infrastructure is not just plumbing. The AI model sitting at the center of your video analysis pipeline is also an infrastructure decision, and the cost difference between model choices is not marginal.

As of May 2026, Perceptron Mk1 processes native video at 2 frames per second over a 32K token window. It is priced at $0.15 per million input tokens and $1.50 per million output tokens, which is 80-90% cheaper than competitors.

At 2 fps over a 32K token context window, Perceptron Mk1 is designed for detailed temporal understanding rather than snapshot analysis. That matters for use cases like sports highlight detection, instructional video indexing, or any scenario where action unfolds over multiple seconds.

Key considerations for integration:

- Cost at scale: An 80-90% reduction in token cost is not a rounding error. For a platform processing 10,000 hours of video per month, this is the difference between a viable and an unviable unit economics model.

- 32K token window: Larger context means the model can reason across longer video segments without chunking artifacts.

- Drop-in integration: Perceptron Mk1 is designed to slot into existing video intelligence pipelines, not replace them.

- Quality at price: Its benchmark performance relative to cost makes it a strong default for any pipeline where analysis quality and cost efficiency are both priorities.
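
To see how the per-token pricing turns into a monthly bill, a rough model helps. The sketch below uses the published rates and frame rate quoted above. The number of tokens consumed per frame is not stated in the source, so `tokens_per_frame` is a hypothetical input, and reading "32K" as 32,000 tokens is also an assumption.

```python
INPUT_USD_PER_MILLION = 0.15   # from the pricing quoted above
OUTPUT_USD_PER_MILLION = 1.50  # from the pricing quoted above
FRAMES_PER_SECOND = 2
CONTEXT_TOKENS = 32_000        # assumed reading of "32K"


def seconds_per_window(tokens_per_frame: int) -> float:
    """Seconds of video that fit in one context window, ignoring prompt text."""
    return CONTEXT_TOKENS / (FRAMES_PER_SECOND * tokens_per_frame)


def input_cost_usd(hours: float, tokens_per_frame: int) -> float:
    """Input-token cost of analyzing the given number of hours of video."""
    tokens = hours * 3600 * FRAMES_PER_SECOND * tokens_per_frame
    return tokens / 1_000_000 * INPUT_USD_PER_MILLION


def output_cost_usd(output_tokens: int) -> float:
    """Cost of the generated analysis text."""
    return output_tokens / 1_000_000 * OUTPUT_USD_PER_MILLION


# Hypothetical: 64 tokens per frame, 10,000 hours of video per month.
window_s = seconds_per_window(64)
monthly_input = input_cost_usd(10_000, 64)
```

Under those assumed inputs, the input side comes to roughly $691 per month and one window covers about 250 seconds of video. Both figures scale linearly with whatever the real tokens-per-frame value turns out to be.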

Comparing video intelligence infrastructure examples: capabilities and trade-offs

With five distinct examples on the table, here is how they stack up across the criteria that matter most for product decisions.

| Infrastructure | Deployment | Real-time | Cost model | Edge/cloud | Key differentiator |
|---|---|---|---|---|---|
| ION Video | Cloud/hybrid | Yes | Archive-based | Cloud | Frame-level virtualization, no duplication |
| VAST DataEngine | Cloud | Yes | Serverless/usage | Cloud | Vector embeddings on object storage |
| Kognition AI | Edge | Yes | Enterprise license | Edge | Multi-camera fusion, zero cloud dependency |
| AWS (Terraform) | Cloud | Yes | GPU compute/usage | Cloud | Autoscaling GPU orchestration at enterprise scale |
| Perceptron Mk1 | Cloud API | Near real-time | Per token | Cloud | 80-90% cheaper model inference |

The most important distinction is not cloud vs. edge. It is where your bottleneck actually lives. If your bottleneck is storage and duplication cost, ION Video addresses it directly. If it is search latency, VAST DataEngine is the answer. If it is data sovereignty, Kognition removes the cloud dependency entirely. Matching the infrastructure to your actual bottleneck is faster than benchmarking everything.

Making the right infrastructure choice for your video intelligence platform

No single infrastructure wins every scenario. Here is a decision framework based on your platform's actual constraints.

- Data sovereignty is non-negotiable: Choose Kognition AI for edge-first processing with zero cloud dependency and multi-camera fusion.

- Large archive management is your primary cost driver: ION Video's compositional model eliminates transcoding and duplicate storage at scale.

- Real-time search and retrieval is the core product feature: VAST DataEngine's serverless embeddings pipeline delivers sub-second multimodal queries without a dedicated compute layer.

- You need to run multiple AI models concurrently at enterprise scale: AWS with Terraform-managed GPU autoscaling handles the orchestration complexity without manual cluster management.

- Model inference cost is threatening unit economics: Perceptron Mk1 cuts token costs by 80-90% without sacrificing temporal understanding quality.
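
The framework above is effectively a lookup from constraint to approach, which can be written down as a small table in code. The constraint keys are hypothetical labels invented for this sketch, and the mapping simply restates the list above.

```python
# Constraint labels are made up for this example; values restate the list above.
RECOMMENDATIONS = {
    "data_sovereignty": "Kognition AI",
    "archive_cost": "ION Video",
    "search_latency": "VAST DataEngine",
    "multi_model_scale": "AWS with Terraform-managed GPU autoscaling",
    "inference_cost": "Perceptron Mk1",
}


def recommend(constraints: list[str]) -> list[str]:
    """Map each named constraint to the approach suggested for it."""
    unknown = [c for c in constraints if c not in RECOMMENDATIONS]
    if unknown:
        raise KeyError(f"unrecognized constraints: {unknown}")
    return [RECOMMENDATIONS[c] for c in constraints]


# A platform with several constraints gets a combination, not a single answer.
stack = recommend(["archive_cost", "search_latency", "inference_cost"])
```

Listing constraints in priority order keeps the primary bottleneck first in the result.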

Most production platforms end up combining two or more of these approaches. A broadcaster might use ION Video for archive management, VAST DataEngine for search, and Perceptron Mk1 for analysis. Parallel processing of transcription, visual analysis, and metadata extraction via Step Functions reduces pipeline latency for broadcasters by handling independent stages concurrently, according to AWS AI Solutions Wiki in March 2026.

Pro Tip: Map your infrastructure choice to your current bottleneck, not your projected one. Premature optimization for scale you haven't reached yet is the most expensive infrastructure mistake teams make.

The video infrastructure decision framework becomes most useful when you've already identified which of these five scenarios matches your platform's primary constraint.

Why combining compositional and serverless orchestration defines the future of video intelligence

Here is an opinion that most infrastructure articles won't give you: the edge vs. cloud debate is a distraction. The real architectural divide is between static file systems and compositional, queryable video infrastructure.

As of January 2026, ION Video emphasizes that video superintelligence means shifting from static files to compositional infrastructure, where every frame is addressable without duplication and governed at the source for adaptive experiences. In the same month, VAST Data experts noted that building real-time video intelligence requires serverless orchestration directly on object storage to avoid data movement bottlenecks, which enables agentic applications like incident detection.

These two ideas are not competing — they are complementary. Compositional virtualization solves the storage and rights layer. Serverless orchestration solves the compute and retrieval layer. Together, they create an architecture where video is never duplicated, always queryable, and processed only when needed.

The platforms that will win in the next three years are not the ones with the most GPU capacity. They're the ones that have decoupled storage from compute, embedded semantic understanding at ingestion, and built workflows that treat video as a living dataset rather than a file to be moved around. Legacy systems that still think in terms of "ingest, transcode, store, retrieve" are carrying architectural debt that compounds with every terabyte added.

The practical implication for your team: when evaluating new video infrastructure, ask whether the system treats video as a static artifact or a queryable, composable resource. That single question filters out more bad choices than any benchmark.

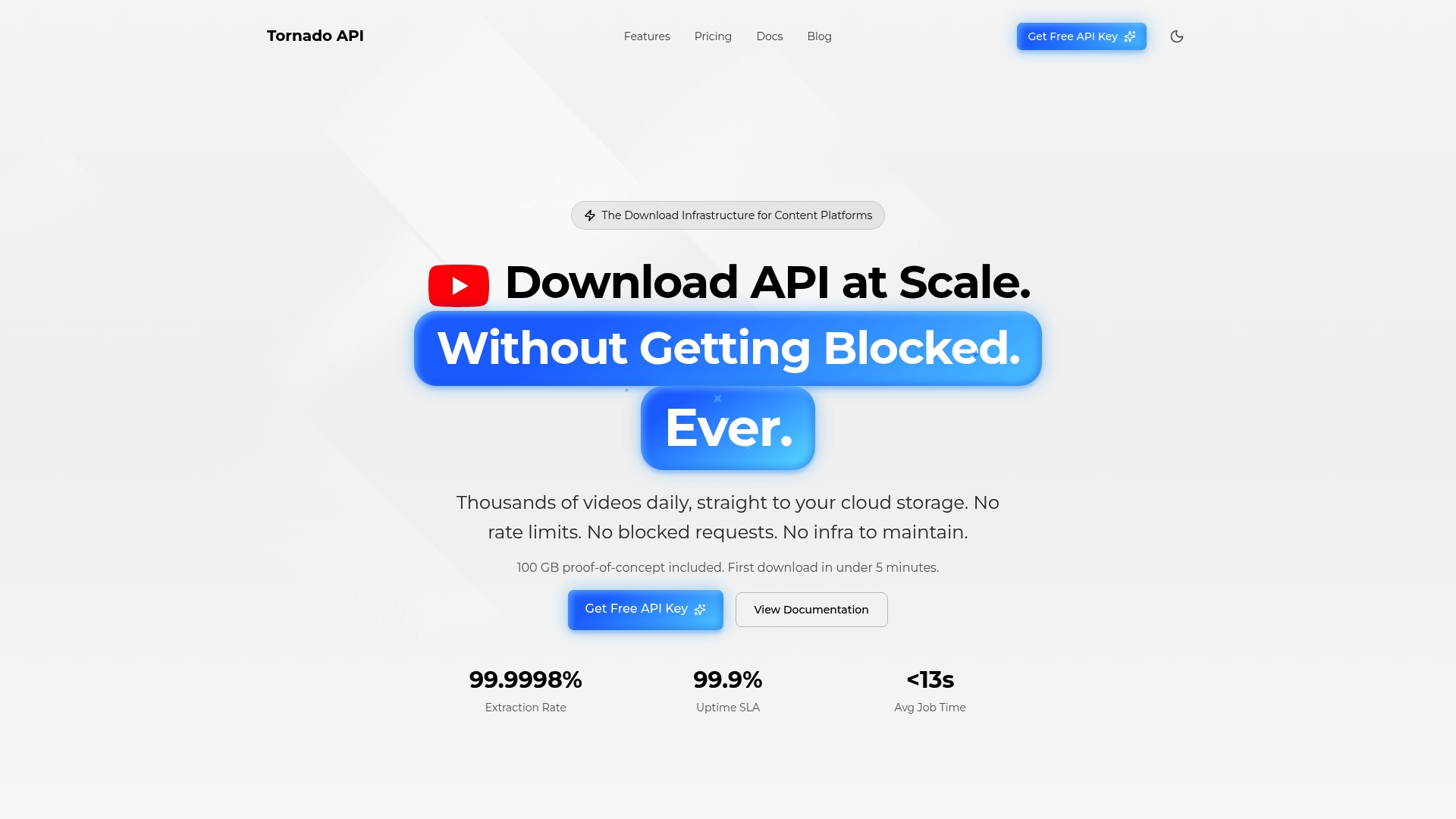

Explore TornadoAPI: production-scale video extraction for your platform

The infrastructure examples above assume one thing: that video arrives in your pipeline reliably, in the right format, from the right sources. That assumption breaks constantly when you're extracting from YouTube, TikTok, Instagram, or Spotify at scale.

TornadoAPI sits between those platforms and your ingestion stack. One API call delivers the file: anti-bot handling, proxy rotation, format normalization, and direct cloud export to S3, R2, GCS, or Azure. We deliver 300 TB per month at 99.998% extraction reliability with a contractual SLA — not a toolbox you manage. Whether you need a video clipping API for highlight extraction or bulk download for dataset construction, production-scale extraction pricing is available for teams from prototype to frontier lab. Book a 30-minute infra-to-infra call at cal.com/velys/30min.

Frequently asked questions

What is video superintelligence infrastructure?

Video superintelligence infrastructure virtualizes video archives to make every frame addressable with semantic metadata, enabling adaptive, real-time orchestration without duplicating files. It replaces the static file model with a compositional one where content is governed and assembled at the source.

How does serverless orchestration benefit video intelligence platforms?

Serverless orchestration allows video processing functions to run directly on object storage without data movement bottlenecks, enabling real-time search and AI summarization at scale. VAST DataEngine demonstrates this with sub-second vector embedding retrieval for multimodal queries as of January 2026.

Why is edge video analytics important for enterprise security?

Edge processing eliminates cloud dependency, reduces latency to milliseconds, and enables multi-camera data fusion that improves detection accuracy. The Kognition AI platform processes video across multiple cameras in real-time with zero cloud dependency as of February 2026, making it viable for regulated and high-security environments.

What are the cost advantages of Perceptron Mk1 for video analysis?

Perceptron Mk1 processes video at 2 fps priced at $0.15 per million input tokens, running 80-90% cheaper than comparable models as of May 2026. For high-volume platforms, that cost reduction directly determines whether large-scale video analysis is economically viable.

How can I scale GPU resources for video intelligence workloads?

GPU resources can be scaled automatically using infrastructure-as-code tools like Terraform, adjusting cluster size based on processing queue depth. As of November 2025, an AWS enterprise deployment scales GPU workers from 2 to 20 instances based on SQS queue depth, keeping compute costs proportional to actual workload.